Recent rapid developments in the AI field have continued to show up in news stories, research and online. Companies in various fields have already incorporated AI into everyday business practices. The arrival of ChatGPT, the first mainstream, social media-hyped AI program, has nevertheless spurred a number of arguments, such as how and to what extent AI should and shouldn’t be used. So, should healthcare workers consider using AI? The question is not whether they should use it, but how they should use it.

HIPAA Compliance While Using AI

AI has already produced a number of privacy scandals. A June 2023 study by IBM estimated the average cost of a data breach across industries to be around $4.45 million. However, the average cost of a healthcare data breach was the highest among all industries, at $10.93 million. If a healthcare organization plans to use AI in everyday practices, it must focus its efforts on the security of consumer data through compliance with Health Insurance Portability and Accountability Act (HIPAA) rules.

It’s more important than ever for healthcare organizations choosing to use AI to incorporate a safe and secure way to store and transmit data. Networks connecting patients with their care, as well as any external access points, should be secured. This could look like encryption for data that is both stored and transferred, as well as making sure the AI model of choice is hosted on a secure server.
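As a rough illustration of the "transferred" half of that advice, the sketch below builds a client-side TLS configuration using only Python's standard `ssl` module. The function name `make_client_tls_context` is an assumption made for this example, and the sketch does not cover encryption of stored data, which would typically rely on a vetted cryptography library or the storage platform's own features.

```python
import ssl


def make_client_tls_context() -> ssl.SSLContext:
    """Build a TLS context for outbound connections (hypothetical helper).

    create_default_context() turns on certificate verification and
    hostname checking; the explicit minimum version refuses older
    protocol versions.
    """
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


ctx = make_client_tls_context()
```

A context like this can be passed to standard-library clients, for example `urllib.request.urlopen(url, context=ctx)` or `http.client.HTTPSConnection(host, context=ctx)`, so that data sent to a remote AI service travels over a verified, encrypted channel.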

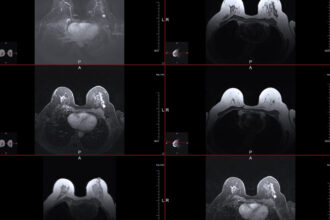

Along with data encryption, as per HIPAA privacy rules, data should always be anonymous. Regardless of who has access to private health records, the chosen AI model should be trained on de-identified patient data. Organizations should adopt methods such as safe harbor, which is based on removing specific identifiers from the data set, and differential privacy as possible solutions for de-identification. Differential privacy involves adding statistical noise to data so that overall patterns can be described, but individual data cannot be extracted. For example, when a traffic app looks at where people are going on their phones, it doesn’t want to store the exact location of each person in its database, in order to preserve consumer privacy. So, it adds some fuzziness to the data to keep everyone’s exact location private. This way, the app can still see overall traffic trends without revealing anyone’s specific location. This minimizes security risks if the data is ever compromised.
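The two ideas above can be sketched in a few lines of Python. This is a simplified illustration, not a complete de-identification procedure: the field names in `DIRECT_IDENTIFIERS` are hypothetical and cover only a handful of the identifier categories listed under HIPAA's safe harbor method, and `noisy_count` shows one common way to add statistical noise (the Laplace mechanism) to a simple count.

```python
import random

# Hypothetical field names; a real safe harbor process covers many more
# identifier categories than this illustrative subset.
DIRECT_IDENTIFIERS = {
    "name", "street_address", "phone", "email", "ssn", "medical_record_number",
}


def strip_identifiers(record: dict) -> dict:
    """Return a copy without direct identifiers, keeping only the birth year."""
    cleaned = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    if "birth_date" in cleaned:
        # Assumes an ISO date string such as "1980-05-17".
        cleaned["birth_year"] = cleaned.pop("birth_date")[:4]
    return cleaned


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw Laplace noise as the difference of two exponential draws."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def noisy_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Laplace mechanism for a counting query (sensitivity 1).

    Smaller epsilon means more noise and stronger privacy.
    """
    return true_count + laplace_noise(1 / epsilon, rng)


record = {
    "name": "Jane Doe",
    "ssn": "000-00-0000",
    "birth_date": "1980-05-17",
    "diagnosis": "asthma",
}
cleaned = strip_identifiers(record)

rng = random.Random(0)
released = noisy_count(100, epsilon=0.5, rng=rng)
```

Any single released count is slightly off, but the noise averages out to zero, so aggregate trends remain visible, much like the traffic app example.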

One major concern about using AI revolves around data sharing. It is important for any healthcare organization that chooses to use AI to understand the type of model being used and whether or not the AI follows the same data-sharing agreements and patient consent forms. AI applications run on algorithms: a process or set of rules a computer must follow for the AI to work correctly. AIs can follow two kinds of algorithms: supervised and unsupervised.

Supervised algorithms use inputs and outputs that are already known in advance. These processes can produce highly accurate models because the answers are already known. Unsupervised algorithms are the opposite. Data is fed into the algorithm while the computer learns what to look for. The exact answer may not be included in the feed, so the computer must identify relationships and patterns in the data on its own. The type of AI algorithm used will influence the way a healthcare organization must update its processes to maintain HIPAA compliance.
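To make the distinction concrete, here is a toy sketch using only the Python standard library. The numbers and the labels "normal" and "elevated" are made up for illustration, and both functions are deliberately simple stand-ins (a nearest-centroid classifier and a two-group, one-dimensional k-means) rather than anything a production system would use.

```python
from statistics import mean


def fit_supervised(values, labels):
    """Supervised: the labels are known, so learn one average per label."""
    groups = {}
    for value, label in zip(values, labels):
        groups.setdefault(label, []).append(value)
    return {label: mean(vals) for label, vals in groups.items()}


def predict(centroids, value):
    """Assign a new value to the label whose average is closest."""
    return min(centroids, key=lambda label: abs(centroids[label] - value))


def fit_unsupervised(values, iterations=10):
    """Unsupervised: no labels; find two groups by repeated averaging."""
    centers = [min(values), max(values)]
    for _ in range(iterations):
        clusters = [[], []]
        for value in values:
            nearest = 0 if abs(value - centers[0]) <= abs(value - centers[1]) else 1
            clusters[nearest].append(value)
        # Keep the previous center if a cluster ends up empty.
        centers = [mean(c) if c else centers[i] for i, c in enumerate(clusters)]
    return sorted(centers)


# Made-up readings for illustration only.
readings = [85, 90, 95, 150, 160, 170]
labels = ["normal", "normal", "normal", "elevated", "elevated", "elevated"]

centroids = fit_supervised(readings, labels)
new_label = predict(centroids, 100)
found_centers = fit_unsupervised(readings)
```

The supervised version needs someone to supply the labels up front, while the unsupervised version discovers the two groups from the readings alone and leaves it to a person to decide what those groups mean.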

Organizations should consider limiting who has access to the AI model to mitigate accidental data breaches or information leaks. Only identified staff members and primary physicians who need certain data should be able to access it. In addition, all personnel and vendors should be adequately trained on their access limitations, data usage limitations and other security compliance requirements when it comes to patient records.
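One way to picture this kind of restriction is a role-based filter that only returns the fields a given role is allowed to see. The roles and field names below are hypothetical; a real deployment would usually rely on the access controls of its identity or records system rather than a hand-written table.

```python
# Hypothetical roles and field names, for illustration only.
ROLE_PERMISSIONS = {
    "primary_physician": {"patient_id", "diagnosis", "medications", "lab_results"},
    "billing_staff": {"patient_id", "insurance_plan"},
}


def visible_fields(role: str, record: dict) -> dict:
    """Return only the fields the role may see; unknown roles see nothing."""
    allowed = ROLE_PERMISSIONS.get(role, set())
    return {key: value for key, value in record.items() if key in allowed}


patient = {
    "patient_id": "P-001",
    "diagnosis": "asthma",
    "medications": ["albuterol"],
    "insurance_plan": "Plan A",
}

physician_view = visible_fields("primary_physician", patient)
billing_view = visible_fields("billing_staff", patient)
unknown_view = visible_fields("contractor", patient)
```

Denying access by default for unrecognized roles is a deliberate choice here: a missing entry in the table results in an empty view rather than an accidental disclosure.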

Because sensitive patient data may be contained in this AI network, it is important that the AI models go through regular audits and risk assessments. Continuous and regular auditing not only strongly enforces HIPAA compliance, but also contributes to trustworthy AI by addressing biases to improve model accuracy, protect consumer rights, ensure data quality, maintain relevance and monitor system changes.
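As one small example of what a bias check within such an audit might look like, the sketch below compares model accuracy across patient groups and flags a large gap. The group names, the sample rows and the 0.1 threshold are all assumptions chosen for illustration; a real audit would cover far more than a single accuracy comparison.

```python
from collections import defaultdict


def accuracy_by_group(rows):
    """rows: iterable of (group, predicted, actual) tuples."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for group, predicted, actual in rows:
        totals[group] += 1
        correct[group] += int(predicted == actual)
    return {group: correct[group] / totals[group] for group in totals}


def has_accuracy_gap(accuracies, max_gap=0.1):
    """Flag when the best- and worst-served groups differ by more than max_gap."""
    if not accuracies:
        return False
    return max(accuracies.values()) - min(accuracies.values()) > max_gap


# Made-up audit sample: (group, model prediction, true outcome).
audit_rows = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 0, 1),
]

accuracies = accuracy_by_group(audit_rows)
needs_review = has_accuracy_gap(accuracies)
```

Running a check like this on a schedule, using de-identified evaluation data, gives auditors a concrete signal to investigate rather than a general impression of how the model is performing.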

Conclusion

When it comes to using AI in a healthcare setting, there are a number of ways in which these programs can be used thoughtfully. However, it is extremely important to make sure the AI is being used in a way that meets HIPAA standards of patient protection. This not only better protects the patient, but also saves healthcare organizations from costly data breaches. Through careful consideration and use, AI can meet these standards. But it will be up to each healthcare and medical organization to make sure it follows the compliance standards needed to meet these guidelines.