As AI increasingly makes its way into our daily lives, there’s little doubt that its impact on healthcare and medicine will affect everyone, whether or not they choose to use AI for their own activities. So how can we ensure that we implement AI in a responsible way that provides mostly positive benefits while minimizing the potential downsides?

At the recent 2024 SXSW Conference and Festivals, which took place in March 2024, Dr. Jesse Ehrenfeld, President of the American Medical Association (AMA), spoke on the topic of “AI, Health Care, and the Strange Future of Medicine”. In a follow-up interview on the AI Today podcast, Dr. Ehrenfeld expands on his talk and shares more insights for this article.

Q: How are you seeing AI impact medicine, and why did the AMA recently release a set of AI principles?

Dr. Jesse Ehrenfeld: I’m a practicing doctor, an anesthesiologist, and in fact I saw a bunch of patients earlier this week. I worked in Milwaukee, Wisconsin, at the Medical College of Wisconsin, and I’ve been in practice for about 20 years. I’m the current president of the AMA, which is a household name, and the largest, most influential group representing physicians across the nation. Founded in 1847, it is the purveyor of the Code of Medical Ethics and of many things that help physicians practice health care in America today. I’m board certified in both anesthesiology and clinical informatics. I’m the first board-certified informatician to be President of the AMA. It’s a relatively new specialty designation, and I also spent ten years in the Navy. Foundationally, everything I do comes down to understanding how we can support the delivery of high quality medical care to our patients, informed by my work and active practice.

It won’t surprise you, but doctors have been burdened with a lot of technology that has just sucked, not worked, and been a burden, not an asset. We just don’t want that anymore, especially with AI. So the AMA released a set of principles for AI development, deployment, and use in November 2023, which comes in response to concerns that we’re hearing from both physicians and the public.

The public has a lot of questions about these AI systems. What do they mean? How can they trust them? Security, all of those things. Our principles are guiding all of our work, our engagement with the federal government, Congress, the administration, as well as industry, around how we make sure that we set these technologies up to work as they’re developed, deployed, and ultimately used in the care delivery system.

We have been working on AI policy since 2018. But in the latest iteration, we call for a whole-of-government approach to AI. We’ve got to make sure that we mitigate risk to patients and that we maximize utility. These principles came from a lot of work to bring subject matter experts together, including physicians, informaticists, and national specialty groups, and there’s a lot in these principles.

Q: Can you provide an overview of these AI principles?

Dr. Jesse Ehrenfeld: Above all else, we want to make sure that healthcare AI is designed, developed, and deployed in a way that is ethical, equitable, responsible, and transparent. Our vision and perspective is that compliance with a national governance policy is necessary to develop AI in an ethical and responsible way. Voluntary agreements and voluntary compliance are not sufficient. We need regulation, and we should have a risk-based approach. The level of scrutiny, validation, and oversight should be proportional to the potential for harm or consequences that an AI system might introduce. So if you’re using it to support diagnosis versus a scheduling operation, maybe it requires a different level of oversight.

We have done a lot of survey work with physicians across the country to understand what’s happening in practice today as usage of these technologies increases. The results are exciting, but I think they also probably should serve as a bit of a warning to developers and regulators. Physicians generally are very enthusiastic about the potential of AI in healthcare. 65% of US physicians from a nationally representative sample see some advantage to using AI in their practice: helping with documentation, helping with translating documents, assisting with diagnoses, and eliminating administrative burdens through automation, such as prior authorization.

But they also have concerns. 41% of physicians say that they are equally as excited about AI as they are worried. And there are additional concerns about patient privacy and the impact on the patient-physician relationship. At the end of the day, we want safe and reliable products in the marketplace. That’s how we’ll get the trust of physicians and of consumers, and obviously all of our work to support the development of high quality, clinically validated AI comes back to these principles.

Q: What are some of these data and health privacy concerns you’re focusing on?

Dr. Jesse Ehrenfeld: What I see is more questions than answers from patients and consumers on data and AI. For example, with a health care app, what does it do? Where does the data go? Can I use that information or share it? And unfortunately, the federal government has not really made sure that there’s transparency around where your data goes. The worst example of this is a company and a developer and an app that they label as “HIPAA compliant”. In the mind of the average person, “HIPAA compliant” means that their data is safe, private, and secure. Well, applications are not a covered entity under HIPAA, and HIPAA only applies to covered entities. So saying you’re “HIPAA compliant” when you’re not covered by HIPAA is completely misleading, and we just shouldn’t allow that kind of thing to happen.

There is also a lot of concern about where health data goes, and that obviously extends into AI use with patients. 94% of patients tell us that they want strong laws to govern the use of their healthcare data. Patients are hesitant to use digital tools if they don’t understand the privacy considerations around them. There’s a lot that we have to do in the regulatory space. But there’s also a lot that AI developers can do, even if they’re not statutorily required, to strengthen trust in AI data use.

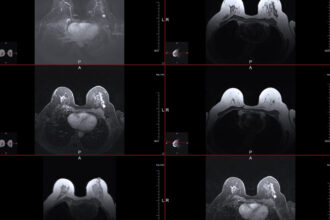

Pick your favorite big technology company. Do you trust them with your healthcare data? What if there’s a data breach? Would you upload a sensitive photo of a body part to their server to allow it to give you some information about potential conditions that you might be worried about? What do you do when there’s a problem? Who do you call? So I think there need to be opportunities to create more transparency around where collected data goes. How do you opt out of having your data pooled and shared, and so on and so forth?

Unfortunately, HIPAA doesn’t solve all of this. In fact, a lot of these applications are not covered by HIPAA. More needs to be done to ensure the safety and privacy of healthcare data.

Q: Where and how do you see AI having the most positive impact on healthcare and medicine?

Dr. Jesse Ehrenfeld: We have to use technologies like AI, and we will have to embrace them if we’re going to solve the workforce crisis that exists in healthcare today. It’s a global problem. It’s not limited to the United States. 83 million Americans do not have access to primary care. We also don’t have enough physicians today in America. We could never open enough medical schools and residency programs to meet those demands if we continue to work and deliver care in the same way.

When we talk about AI from an AMA lens, we actually like to use the term augmented intelligence, not artificial intelligence. It comes back to this foundational principle that the tools should be just that: tools to boost the capacity and capabilities of our healthcare teams, physicians, nurses, everybody involved, so they can be more effective and more efficient in delivering care. What we need, however, are platforms. Right now, we’ve got a lot of one-off point solutions that don’t integrate together, and platforms are a direction that I think we’re starting to see companies move quickly towards. Obviously, we’re eager for that to happen in the physician space.

We are trying a lot of different pathways to make sure that we have a voice at the table throughout the design and development process. We’ve got our Physician Innovation Network, a free online platform to bring physician entrepreneurs together to help fuel change and innovation and bring better products to the marketplace. Companies are looking for clinical input, and clinicians want to get connected with entrepreneurs. We also have a technology incubator in Silicon Valley called Health2047. They’ve spun off a few dozen companies powered by insights that we have as physicians in the AMA.

At the end of the day, it comes down to making sure that we have a regulatory framework that ensures that only clinically validated products are brought into the marketplace. And we’ve got to make sure that tools truly live up to their promise and that they are an asset, not a burden.

I don’t think that AI will replace physicians, but I do think that physicians who use AI will replace those who don’t. AI products have tremendous potential and promise to alleviate the administrative burdens that physicians and practices experience. Ultimately, I expect there’s going to be a lot of success in the ways that we directly utilize AI in patient care. There’s a lot of excitement there, but we need to make sure that we have tools and technologies that address challenges around racial bias, errors that can cause harm, security, privacy issues, and threats to health information. Physicians need to understand how to manage these risks and how to manage liability before we rely on more and more tools.

(disclosure: I’m a co-host of the AI Today podcast)